First-party data in online advertising, part 2: How to collect it and send it to media systems

In the first part of our series on using first-party data in online advertising, we explained why first-party data is important for media targeting and improving campaign performance. In this part, we will take a closer look at how to collect first-party data correctly and send it to media systems. We will go through the full journey of first-party data, from the dataLayer through normalization and hashing to sending it via server-side Google Tag Manager (sGTM) to Google Ads and Meta. The goal is to give you a practical guide that you can implement right away, including code snippets, edge cases, and the places where things most often break in practice.

The good news is that sending first-party data to advertising systems is not technically difficult in itself. The catch is that the details that matter are hidden in normalization, hashing, and the exact way you package the data before sending it. If you know how to do it, it is a one-afternoon task. If you do not, you will spend far more time debugging why a hash does not match, why data is missing in the platform, or, in the worst case, you may not even realize there is a problem at all.

This article will save you from both. We will go through the full technical flow, from collecting data in the dataLayer through normalization and hashing to sending it to Google Ads and Meta:

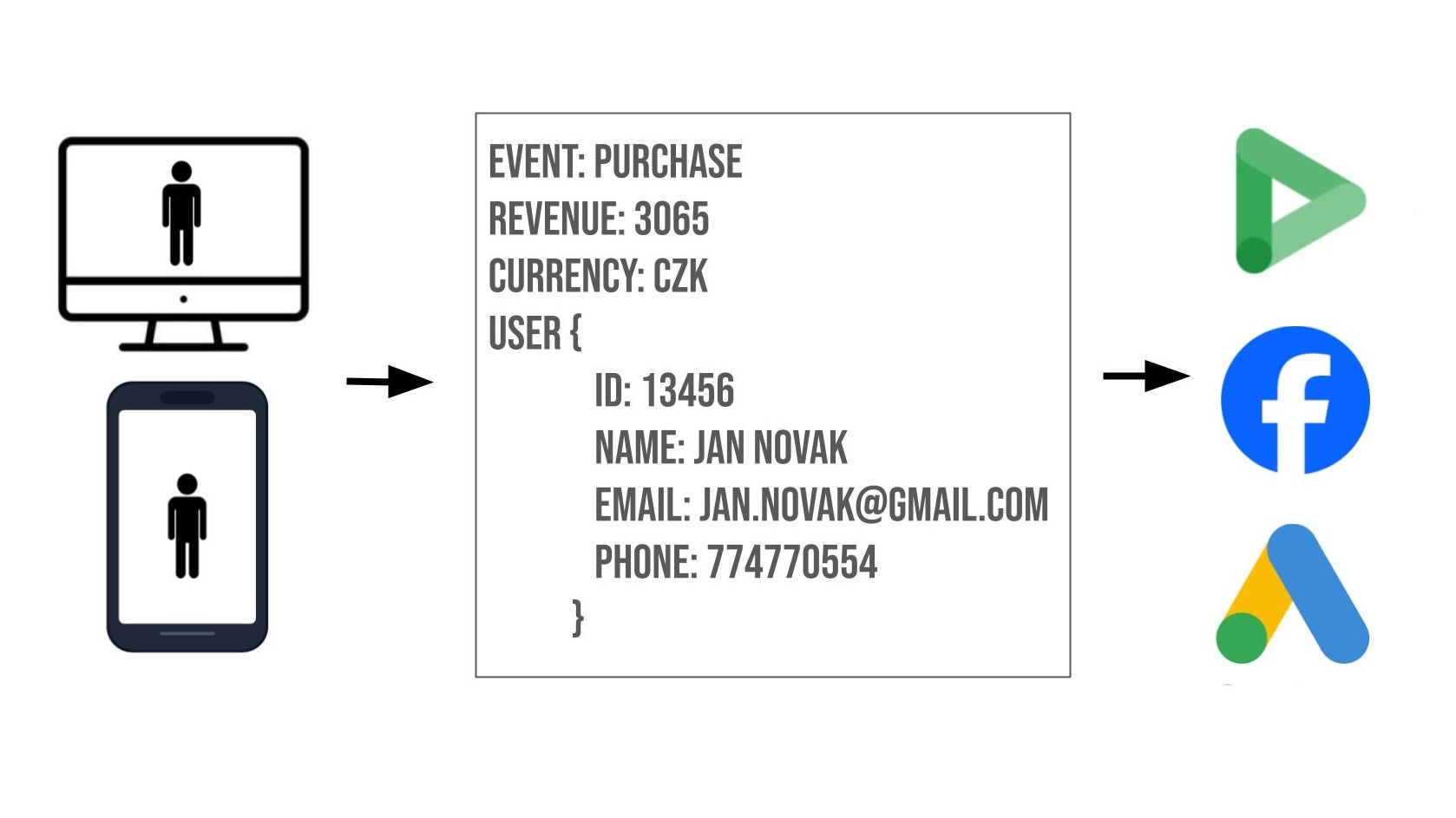

1. A user performs an action on the website, such as a purchase or form submission, during which they provide their personal data. The website pushes this data into the dataLayer as a user_data object.

2. The browser GTM container captures the dataLayer event, and a tag, for example a GA4 tag (other trackers and templates can also be used), sends the hit together with these details to your sGTM endpoint rather than to Google servers.

3. On the sGTM side, the hit is received by the GA4 Client, a special component in sGTM whose role is to recognize the format of incoming data and process it correctly. It receives the incoming payload and transforms it into the so-called Event Data Object, a structured data object. This object contains all fields sent from the browser, including the user_data object.

4. Then the relevant tags are triggered:

a. The Google Ads Conversion Tracking tag on a specific conversion event, such as purchase, automatically parses user_data from the Event Data Object, performs SHA-256 hashing of the fields in the user_data object, and sends the conversion hit to Google Ads servers. Strictly speaking, the setup is hybrid: the Google Ads tag in sGTM returns an instruction in the HTTP response for the GA4 library in the browser to perform a redirect to the DoubleClick domain for third-party cookie stitching.

b. The Meta (Facebook) CAPI template, whether you use the official Meta one or, for example, the Stape.io template, also automatically takes user_data from the Event Data Object. Be careful here: it does this even if you do not map the data manually in the tag.

Collecting user data: the dataLayer as the foundation

Collecting first-party data starts on your website, specifically at the moment when the user gives you their details: during registration, order submission, or newsletter sign-up. The standard approach is to push the data into the dataLayer. Even here, you can run into the first pitfalls.

A few key best practices:

- user_data must be part of the same push as the event, because GTM processes data in the context of a specific event. Data pushed separately may not be available when the tag is triggered.

- Before pushing new ecommerce data, always clear the previous ecommerce object in the data layer with dataLayer.push({ ecommerce: null }). This prevents data from “leaking” from previous events.

- Even though Google documentation claims otherwise, it is not enough to add user_data only to the Google tag (the configuration tag). Add it to individual GA4 event tags as well, such as purchase, generate_lead, and so on. Several specialists agree that adding it only to the config tag is not always reliable.

- If a value from user_data is not available, simply omit the field. Never send a placeholder, such as an empty string, "unknown", "N/A", and so on. The systems will hash the placeholder and use it as a valid identifier, effectively merging all users without that value into a single profile. The result is distorted analytics both in advertising platforms and in GA4.
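The practices above can be combined in one small helper. The following is a minimal sketch, not a definitive implementation: buildUserData and pushEvent are hypothetical names, the placeholder list is an assumption you should adapt to your own data, and the dataLayer array is passed in as an argument so the snippet also runs outside a browser.

```javascript
// Values treated as "missing" (an assumed list; extend it for your own data).
const PLACEHOLDERS = ['', 'unknown', 'n/a', 'null', 'undefined'];

// Returns a copy of `raw` without empty or placeholder fields.
// Nested objects (such as address) are cleaned recursively and
// dropped entirely if nothing usable remains.
function buildUserData(raw) {
  const clean = {};
  for (const [key, value] of Object.entries(raw || {})) {
    if (value && typeof value === 'object') {
      const nested = buildUserData(value);
      if (Object.keys(nested).length > 0) clean[key] = nested;
    } else if (
      value != null &&
      !PLACEHOLDERS.includes(String(value).trim().toLowerCase())
    ) {
      clean[key] = value;
    }
  }
  return clean;
}

// Clears the previous ecommerce object, then pushes the event together
// with user_data in a single push so both are available to the tag.
function pushEvent(dataLayer, eventName, ecommerce, rawUserData) {
  dataLayer.push({ ecommerce: null });
  const payload = { event: eventName, ecommerce: ecommerce };
  const userData = buildUserData(rawUserData);
  if (Object.keys(userData).length > 0) payload.user_data = userData;
  dataLayer.push(payload);
}
```

On a real page you would call it as pushEvent(window.dataLayer, 'purchase', ecommerceObject, rawUserData). If no usable identifier remains, the user_data key is left out of the push altogether rather than sent empty.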

SHA-256 hashing

A fundamental element of first-party data implementation is hashing. In the world of advertising platforms such as Google or Meta, one specific algorithm is used as the standard: SHA-256. It is a one-way hashing algorithm that converts any input into a 64-character hexadecimal string. Google, Meta, as well as Reddit, X, Pinterest, TikTok, and others use SHA-256 to match first-party data against their database of logged-in users. The key decision is where to hash: on the client side in the browser, or on the server side in sGTM.

The good news: if you want to save yourself work and avoid unnecessary complications, from a practical perspective you do not have to handle hashing manually. All tags and templates for Google Ads and Meta Pixel/CAPI perform hashing automatically, whether in the browser or on the server. The Google Ads tag automatically hashes user_data fields before sending them, and the Meta CAPI tag, both the official Facebook template and the Stape template, does the same. In addition, they can detect whether the data is already hashed, and in that case they do not hash it again.
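If you do decide to hash yourself, the operation is short. Below is a minimal Node.js sketch using the built-in crypto module (in the browser, the Web Crypto API's crypto.subtle.digest offers the same algorithm). The helper names sha256Hex, looksHashed, and hashOnce are hypothetical, and the "already hashed" check is only a heuristic of our own: we assume that any 64-character hexadecimal string is an existing SHA-256 hash, which is not necessarily how the Google or Meta templates detect it.

```javascript
const { createHash } = require('node:crypto');

// Returns the SHA-256 digest of a string as 64 lowercase hex characters.
function sha256Hex(value) {
  return createHash('sha256').update(value, 'utf8').digest('hex');
}

// Heuristic (assumption): exactly 64 hex characters means "already hashed".
function looksHashed(value) {
  return /^[a-f0-9]{64}$/i.test(value);
}

// Hashes an already normalized value unless it looks hashed,
// which avoids hashing the same identifier twice.
function hashOnce(value) {
  return looksHashed(value) ? value.toLowerCase() : sha256Hex(value);
}
```

Note that the input must be normalized before it reaches sha256Hex: two inputs that differ by a single space or capital letter produce completely unrelated hashes.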

However, the question is not only technical, but also security-related. Do you want to entrust the protection of sensitive data to an automated script from Google or Meta? If not, there are essentially three possible levels of protection:

1. Hash the data before sending it to the data layer, so the raw email or phone number never appears in the browser.

2. Omit personal data in the browser and send it from your CRM, then hash it using a function in server-side GTM.

3. Hash the data on your own server or backend before sending it to sGTM.

Each of these options has different implementation requirements and a different security impact. We will cover the question of when and where to hash in more detail in one of the next parts of this series.

Data normalization before hashing

Normalization is the step that determines whether your data will be useful at all. A poorly normalized hash will not match the platform’s database, and the conversion will not be attributed. In addition, Google and Meta have different rules in several areas, so one function for both is not enough.

Google documentation: https://developers.google.com/google-ads/api/docs/conversions/upload-offline#prepare-data

Meta documentation: https://developers.facebook.com/docs/marketing-api/conversions-api/parameters/customer-information-parameters/#formatting-the-user-data-parameters

Email

Both platforms require converting the address to lowercase and trimming leading and trailing spaces. The key difference is how Gmail addresses are handled. Google Ads Enhanced Conversions requires removing dots from the username part of addresses on gmail.com and googlemail.com, as well as everything after the + sign (plus-addressing). Meta CAPI does not require this: its documentation specifies only trim and lowercase. Removing dots from a Gmail address for Meta would produce a hash that does not match.

Phone number

Both platforms require a format with a country prefix:

- Google requires E.164 format with a leading +.

- Meta requires practically the same thing, but without the + sign, only digits including the country code.

No spaces, hyphens, or brackets. Always include the country code, even if all your data comes from one country.
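In practice, phone numbers often arrive in national format or with a 00 prefix. The sketch below shows one way to bring them to a common form under stated assumptions: toE164 is a hypothetical helper, a number without + or 00 is assumed to be a national number for the default country, and leading trunk zeros are stripped (correct for many countries, but not all, so verify this for your markets).

```javascript
// Converts a phone number to E.164. `defaultCountryCode` (digits only,
// e.g. '420') is applied when the input has no international prefix.
function toE164(phone, defaultCountryCode) {
  const trimmed = String(phone).trim();
  const hasPlus = trimmed.startsWith('+');
  let digits = trimmed.replace(/\D/g, ''); // keep digits only
  if (!hasPlus && digits.startsWith('00')) {
    digits = digits.slice(2); // 00420... -> 420...
  } else if (!hasPlus) {
    // Assumption: no prefix means a national number; drop trunk zeros.
    digits = defaultCountryCode + digits.replace(/^0+/, '');
  }
  return '+' + digits;
}

// Google: E.164 with the leading +.
function phoneForGoogle(phone, defaultCountryCode) {
  return toE164(phone, defaultCountryCode);
}

// Meta: the same digits without the +.
function phoneForMeta(phone, defaultCountryCode) {
  return toE164(phone, defaultCountryCode).slice(1);
}
```

For example, phoneForGoogle('731 458 920', '420') yields +420731458920 and phoneForMeta with the same input yields 420731458920. A number that already contains the country code but lacks + or 00 would be prefixed a second time, so clean such data at the source.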

First and last name

Both platforms: lowercase, without punctuation. Meta additionally requires removing special characters, but Czech diacritics are OK and should be preserved.
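A name normalizer following these rules could look like the sketch below. normalizeName is a hypothetical helper; it relies on Unicode property escapes to remove punctuation and symbols while leaving letters with diacritics untouched. Treating hyphens and apostrophes as punctuation to be removed is our reading of "without punctuation", so check it against the platform documentation for your use case.

```javascript
// Lowercases a name, removes punctuation and symbols, collapses repeated
// whitespace, and keeps letters with diacritics (e.g. 'á', 'ř') as they are.
function normalizeName(name) {
  return String(name)
    .trim()
    .toLowerCase()
    .replace(/[\p{P}\p{S}]/gu, '') // \p{P} = punctuation, \p{S} = symbols
    .replace(/\s+/g, ' ')
    .trim();
}
```

With this sketch, 'Novák' becomes novák and "O'Brien" becomes obrien.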

Address

Meta hashes all address fields, meaning city, state, ZIP code, and country, while Google hashes only first name, last name, and street address. City, region, postal code, and country are sent in plaintext. For Google, the country must be in ISO 3166-1 alpha-2 format, such as CZ, SK, DE, while Meta expects lowercase, such as cz, sk.
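The difference is easier to see side by side. The Node.js sketch below is illustrative only: addressForGoogle and addressForMeta are hypothetical helpers, the hashed/plaintext grouping of the Google result is our own shape rather than a platform payload, and removing whitespace from city, state, and ZIP for Meta is an assumption based on our reading of Meta's formatting guidance. The sketch also assumes a complete address is passed in; missing fields should be omitted before calling it.

```javascript
const { createHash } = require('node:crypto');

const sha256Hex = (value) =>
  createHash('sha256').update(value, 'utf8').digest('hex');
const lower = (value) => String(value).trim().toLowerCase();
// Lowercase and remove all whitespace (assumed rule for Meta city/state/ZIP).
const compact = (value) => lower(value).replace(/\s+/g, '');

// Google: hash name and street, send the remaining fields in plaintext.
function addressForGoogle(address) {
  return {
    hashed: {
      first_name: sha256Hex(lower(address.first_name)),
      last_name: sha256Hex(lower(address.last_name)),
      street: sha256Hex(lower(address.street)),
    },
    plaintext: {
      city: address.city,
      region: address.region,
      postal_code: address.postal_code,
      country: String(address.country).trim().toUpperCase(), // e.g. CZ
    },
  };
}

// Meta: every field is normalized and then hashed; country is lowercase.
function addressForMeta(address) {
  return {
    fn: sha256Hex(lower(address.first_name)),
    ln: sha256Hex(lower(address.last_name)),
    ct: sha256Hex(compact(address.city)),
    st: sha256Hex(compact(address.region)),
    zp: sha256Hex(compact(address.postal_code)),
    country: sha256Hex(lower(address.country)), // e.g. cz
  };
}
```

The keys fn, ln, ct, st, zp, and country are the customer information parameter names Meta uses; everything else in the sketch is naming of our own.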

How should you normalize?

Ideally, normalization should already happen on the website, meaning your developer should send normalized, and potentially already hashed, personal data directly into the data layer. If that is not possible for some reason, you can write your own normalization function in GTM. It might look something like this:

Google (the platform parameter lets the same functions also serve Meta):

    function normalizeEmail(email, platform) {
      email = email.trim().toLowerCase();
      if (platform === 'google') {
        const [user, domain] = email.split('@');
        if (['gmail.com', 'googlemail.com'].includes(domain)) {
          // Gmail only: drop dots and any +suffix from the username
          let cleanUser = user.replace(/\./g, '');
          if (cleanUser.includes('+')) {
            cleanUser = cleanUser.substring(0, cleanUser.indexOf('+'));
          }
          return cleanUser + '@' + domain;
        }
      }
      return email;
    }

    function normalizePhone(phone, platform) {
      // Remove spaces, hyphens, and brackets
      let digits = phone.replace(/[\s\-\(\)]/g, '');
      // Assumes the number already includes the country code
      if (!digits.startsWith('+')) digits = '+' + digits;
      // Meta: without +
      return platform === 'meta' ? digits.replace('+', '') : digits;
    }

Meta:

    function normalizeEmailMeta(email) {
      return email.trim().toLowerCase(); // no gmail dot-stripping
    }

    function normalizePhoneMeta(phone) {
      let digits = phone.replace(/[\s\-\(\)]/g, '');
      if (!digits.startsWith('+')) digits = '+' + digits;
      return digits.replace('+', ''); // no +: 420234567890
    }

Specific requirements for Google Ads

Minimum requirement: at least one identifier, meaning email, phone, or a complete address (first name + last name + postal code + country). If you send only first name and last name, matching will silently fail, so it is better not to send an incomplete address at all.

Implement fallback logic: if a phone number is not available, send at least an email. If you have neither, the conversion will still be sent. The platform will not match it, but it may count it as an unattributed conversion and use modeling.
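The minimum requirement and the fallback logic can be expressed as a small filter. This is a sketch under the rules stated above, with hypothetical helper names (isSet, isCompleteAddress, matchableUserData); it keeps only identifiers that can match on their own and drops an incomplete address instead of sending it.

```javascript
// True for non-empty strings only.
const isSet = (value) => typeof value === 'string' && value.trim() !== '';

// An address counts as complete only with all four fields listed above.
function isCompleteAddress(address) {
  return (
    Boolean(address) &&
    ['first_name', 'last_name', 'postal_code', 'country'].every((key) =>
      isSet(address[key])
    )
  );
}

// Returns user_data reduced to usable identifiers,
// or null when nothing matchable is left.
function matchableUserData(userData) {
  const result = {};
  if (isSet(userData.email)) result.email = userData.email;
  if (isSet(userData.phone_number)) result.phone_number = userData.phone_number;
  if (isCompleteAddress(userData.address)) result.address = userData.address;
  return Object.keys(result).length > 0 ? result : null;
}
```

When the function returns null, the conversion can still be sent without user_data; it simply will not be matched through first-party identifiers.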

Specific requirements for Meta Ads

Meta hashes all customer information fields: email, phone, first name, last name, city, state, ZIP code, and country. In Meta Ads, your events receive an Event Match Quality (EMQ) score, which rates matching quality on a scale from 0 to 10.

Your goal should be to reach 6+ for key conversion events, ideally 8+ for purchase. Adding a hashed email brings roughly +4 points, while a phone number adds about +3 points. It is better to send fewer accurate, correctly normalized parameters than many poorly processed ones.

Setup in GTM and sGTM

Prerequisites

- First-party data in the data layer

- A GTM container deployed on the website

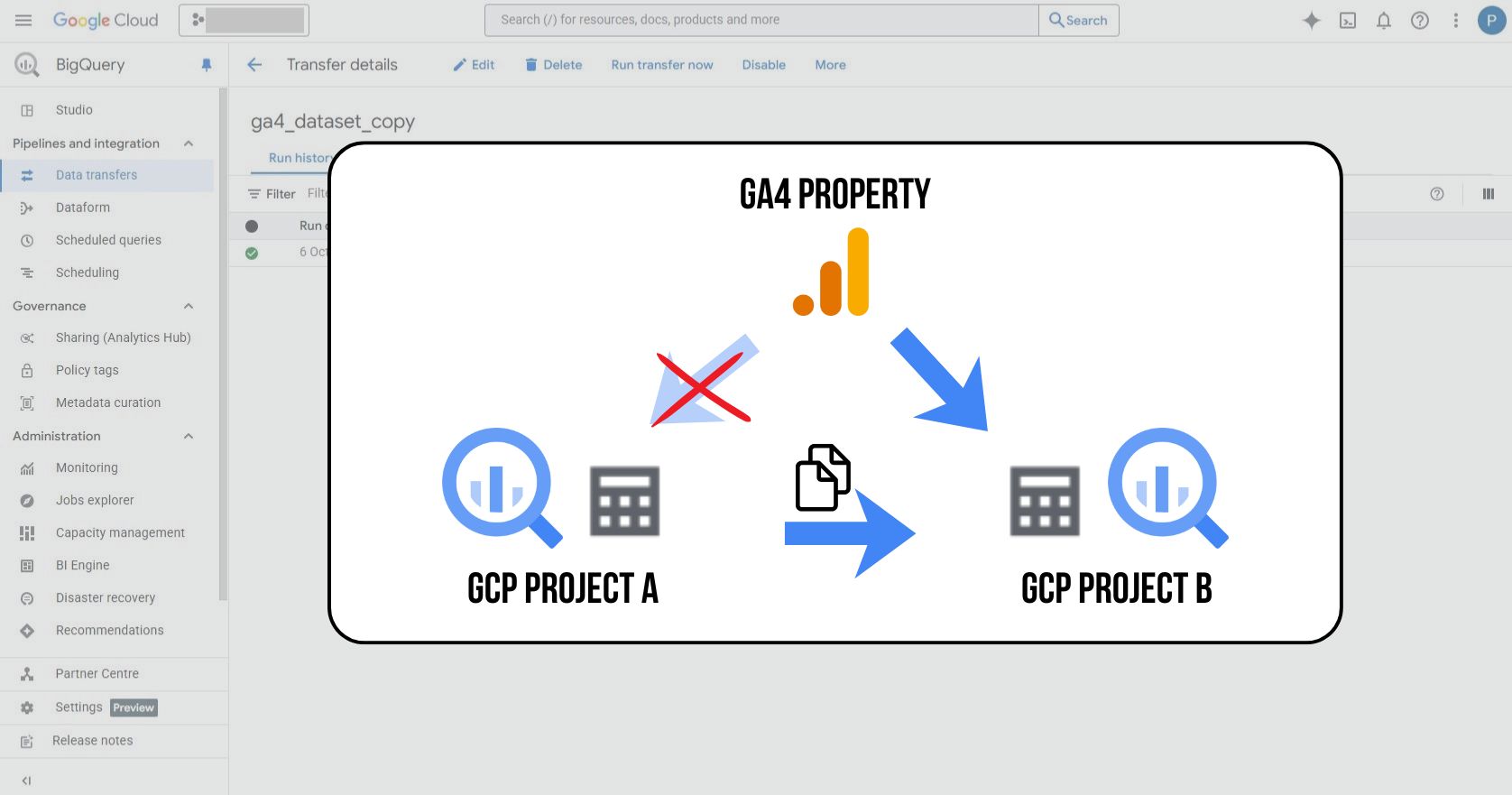

- Server-side measurement with sGTM set up, either through your own server in GCP or a third-party provider

1. Assume you have data deployed in the data layer with the purchase event:

    window.dataLayer.push({
      event: 'purchase',
      ecommerce: {
        transaction_id: '78342916504217',
        value: 249,
        tax: 43.21,
        shipping: 0,
        currency: 'CZK',
        coupon: '',
        items: [
          {
            item_id: 'ALZMNTB01',
            item_name: 'Alzament PLA Basic 1kg Black',
            item_brand: 'Alzament',
            item_category: 'Počítače a notebooky',
            item_category2: '3D tisk',
            item_category3: 'Filamenty pro 3D tiskárny',
            price: 249,
            quantity: 1,
            index: 0
          }
        ]
      },
      new_customer: true,
      customer_type: 'new',
      user_data: {
        email: 'jan.novak@example.cz',
        phone_number: '+420731458920',
        address: {
          first_name: 'Jan',
          last_name: 'Novák',
          street: 'Hlavní 456',
          city: 'Brno',
          region: 'Jihomoravský kraj',
          postal_code: '602 00',
          country: 'CZ'
        }
      }
    });

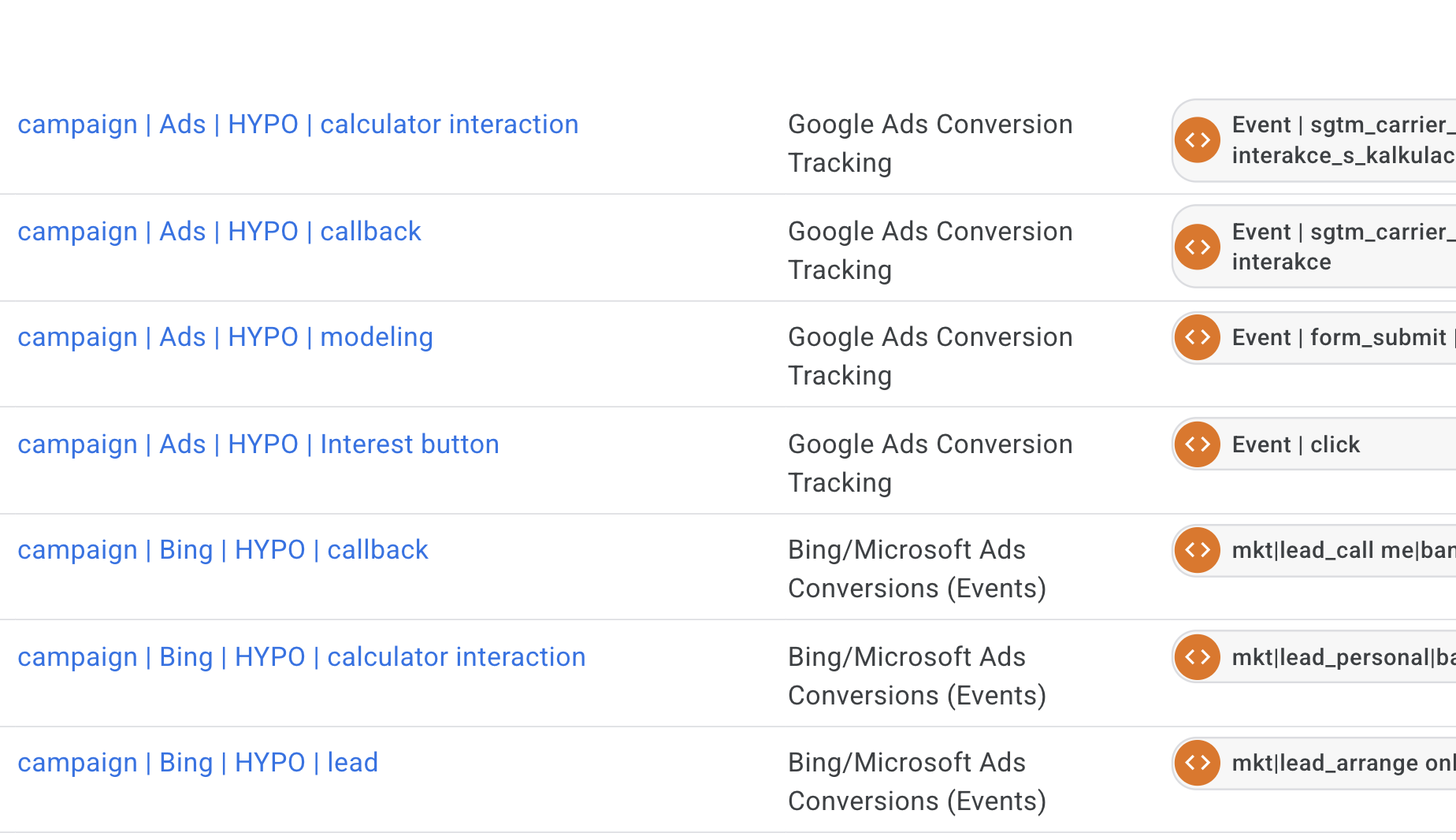

2. In GTM, create a Data Layer Variable for each data point

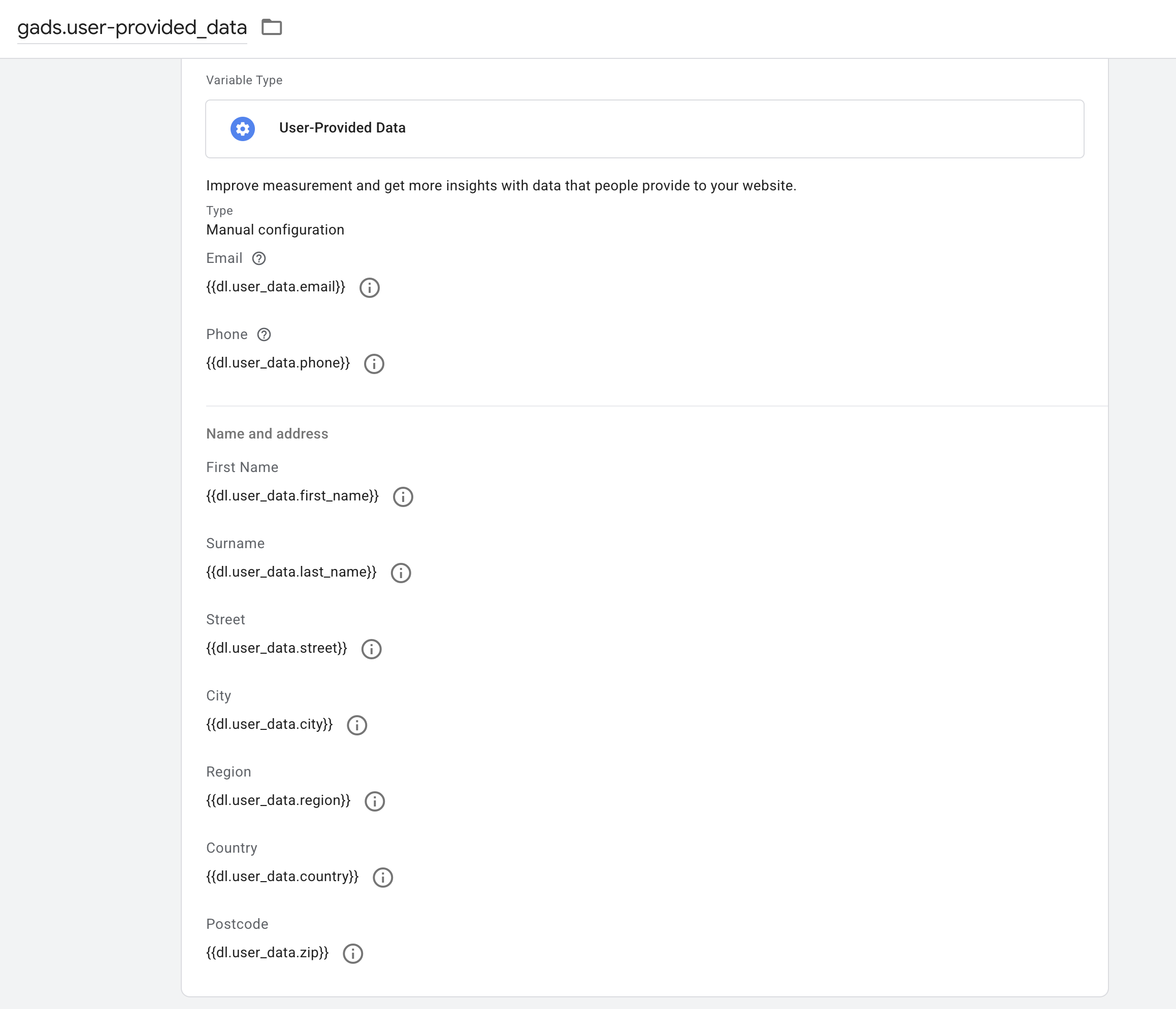
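Data Layer Variables address nested values with dot notation, for example user_data.address.city. The sketch below only illustrates that lookup against a single pushed object; getByPath is a hypothetical helper, and GTM's real data model also merges successive pushes, which this simplification ignores.

```javascript
// Resolves a dot-separated path such as 'user_data.address.city'
// against an object; returns undefined if any segment is missing.
function getByPath(source, path) {
  return path
    .split('.')
    .reduce(
      (current, key) => (current != null ? current[key] : undefined),
      source
    );
}

// Variable names you would create for the purchase push shown earlier.
const variableNames = [
  'user_data.email',
  'user_data.phone_number',
  'user_data.address.first_name',
  'user_data.address.last_name',
  'user_data.address.street',
  'user_data.address.city',
  'user_data.address.region',
  'user_data.address.postal_code',
  'user_data.address.country',
];
```

A variable whose path does not exist in the push resolves to undefined, which is the behavior you want: the field is then simply left out rather than filled with a placeholder.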

3. For Google Ads, use these variables to populate the “User-Provided Data” variable

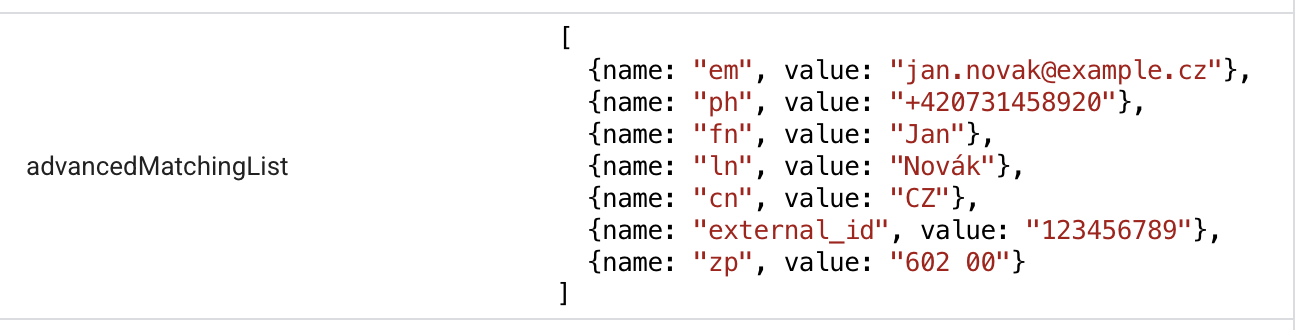

4. In browser GTM, you can configure first-party data sending for Meta tags using the official Meta template, Meta Pixel. Simply check “Enable Advanced Matching” and then fill in the relevant fields.

5. To send first-party data to the server, you need to add the User-provided data variable to your transport GA4 tag as part of the config parameters. Alternatively, you can send individual first-party variables separately using another type of transport tag, such as a Data tag.

6. In sGTM, thanks to the previous step, you will receive the user_data object together with the purchase event. This will happen whenever the data is available in the data layer. At this point, Google Ads conversion measurement is taken care of: the tag automatically reads the data from the user_data object.

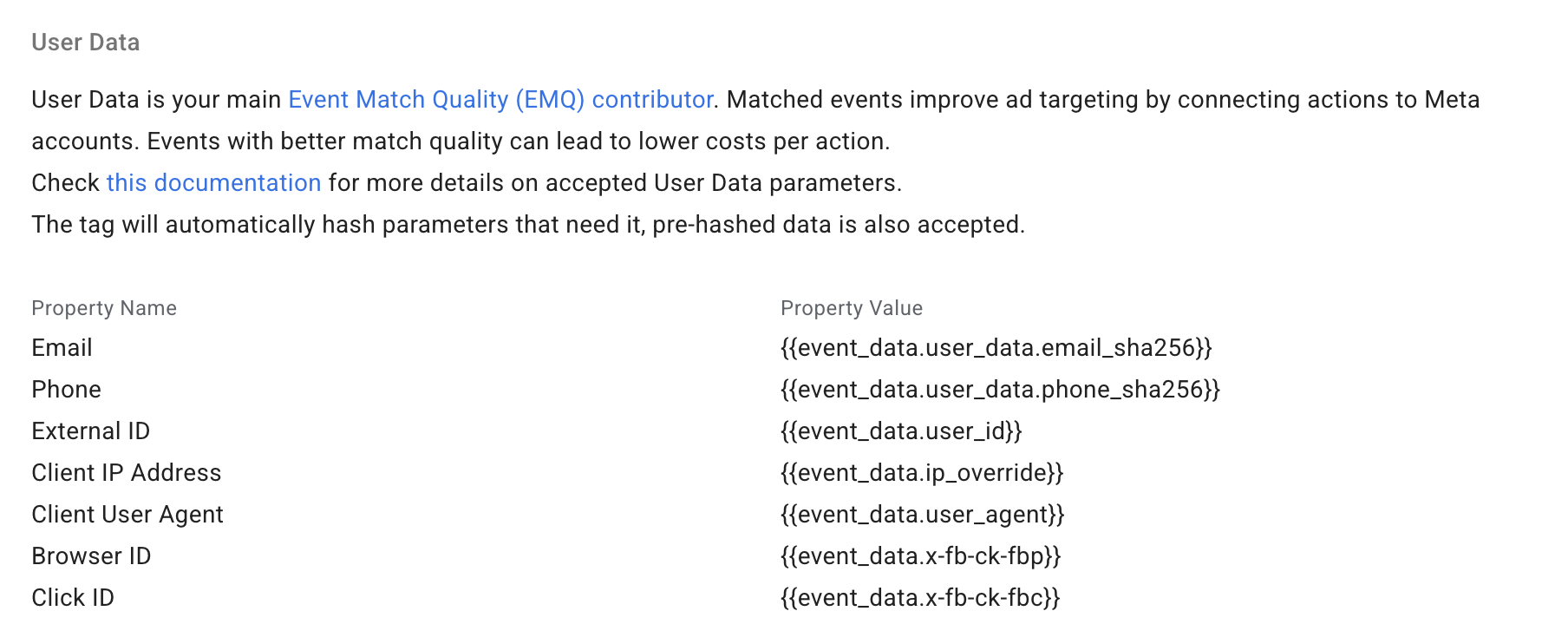

Now all that remains is to configure the sending of user data for Meta Ads server-side tags, whether you use the Meta template or the Stape template.

After deployment, it is a good idea to validate that everything is working as expected: that the data is arriving in the correct format and being sent correctly.

In GTM preview mode for web GTM:

- Check that user_data is being populated correctly, both as individual values and as an object.

- Also check that the data is being passed to the Meta tag and, in the conversion tags, to sGTM.
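Alongside preview mode, a quick check in the browser console can catch obvious formatting problems before they reach the platforms. The following is a sketch with hypothetical helpers (validateUserData and lastEvent) and deliberately loose checks: a simple email pattern, an E.164 pattern for phone numbers, and an assumption that 64-character hex strings are already hashed values that should be skipped.

```javascript
// Assumption: 64 hex characters means the value is already hashed.
const isHash = (value) => /^[a-f0-9]{64}$/i.test(value);

// Returns a list of human-readable problems found in a user_data object.
function validateUserData(userData) {
  if (!userData || typeof userData !== 'object') return ['user_data is missing'];
  const problems = [];
  const { email, phone_number: phone, address } = userData;
  if (email !== undefined && !isHash(email)) {
    if (typeof email !== 'string' || !/^[^\s@]+@[^\s@]+\.[^\s@]+$/.test(email.trim())) {
      problems.push('email is not a plausible address');
    } else if (email !== email.trim().toLowerCase()) {
      problems.push('email is not trimmed and lowercased');
    }
  }
  if (phone !== undefined && !isHash(phone) && !/^\+[1-9]\d{6,14}$/.test(phone)) {
    problems.push('phone_number is not in E.164 format');
  }
  if (email === undefined && phone === undefined && address === undefined) {
    problems.push('no identifier present');
  }
  return problems;
}

// Finds the most recent dataLayer entry with the given event name.
function lastEvent(dataLayer, eventName) {
  return [...dataLayer]
    .reverse()
    .find((entry) => entry && entry.event === eventName);
}
```

In the console, validateUserData(lastEvent(window.dataLayer, 'purchase').user_data) returns an empty array when nothing suspicious was found. An empty result does not prove that the platforms will match the data; it only rules out the most common formatting mistakes.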

With this, you have the basic architecture ready: data flows from the dataLayer through sGTM into both Google Ads and Meta, and it is correctly normalized and hashed. That is the harder part.

But how do you know whether it actually works?

A low match rate can have three different causes, and each one requires a different solution. In the next part, we will go through debugging: how to read signals in GTM preview, what Google Tag Diagnostics can tell you, and how to interpret the Event Match Quality score in Meta. In other words, how to verify that all the work you have just done is actually producing results.